Redesigning the Exponential Technologies Landscape with AI & Blockchain Fusion

AI and blockchain are two of the prime drivers in the technology space, catalyzing the pace of innovation and driving radical shifts across every industry. Each of these technologies comes with a degree of technical complexity and business implications. A fusion of the two could redesign the entire technical landscape, along with its human effect, from scratch.

Blockchain has its own limitations, a mix of technology-related ones and cultural ones inherited from the financial services sector, but most of them can be addressed by AI in one way or another.

The points below give a gist of the potential that can be realized at the intersection of AI and blockchain:

Energy consumption in mining: Mining has already proven to require enormous amounts of energy and to be economically costly. AI has proven effective at optimizing energy consumption across multiple sectors, so similar results can be expected for blockchain. AI could reduce the cost of maintaining servers, and those savings could in turn lower investment in mining hardware.

Federated Learning: The Bitcoin blockchain, for example, grows at a steady pace of roughly 1 MB every 10 minutes. AI-assisted blockchain pruning is one possible solution. New decentralized learning systems such as federated learning, or new data-sharing techniques, could also make the system more efficient.
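To put that growth figure in perspective, here is a rough back-of-the-envelope sketch. It assumes a constant 1 MB block every 10 minutes, which is a simplification (real block sizes vary), and the function name is purely illustrative.

```python
# Back-of-the-envelope ledger growth, assuming a constant 1 MB block
# every 10 minutes (the figure quoted above; real block sizes vary).
BLOCK_SIZE_MB = 1
BLOCK_INTERVAL_MIN = 10


def ledger_growth_mb(days: float) -> float:
    """Return the approximate ledger growth in MB over `days` days."""
    blocks_per_day = 24 * 60 / BLOCK_INTERVAL_MIN  # 144 blocks per day
    return blocks_per_day * BLOCK_SIZE_MB * days


if __name__ == "__main__":
    print(f"per day:  {ledger_growth_mb(1):.0f} MB")
    print(f"per year: {ledger_growth_mb(365) / 1000:.1f} GB")
```

Under these assumptions the ledger grows by about 144 MB a day, or roughly 52.6 GB a year, which is why every full node feels the storage pressure that pruning tries to relieve.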

Security: Concerns remain about the security of the layers and applications built on top of blockchain (e.g., the DAO and Bitfinex incidents). The progress machine learning has made in the last two years makes AI a solid candidate for helping to secure application deployment on the blockchain, especially given the fixed structure of the system.

Blockchain-AI Data gates: Blockchain has proven its ability for record keeping, authentication, and execution, while AI drives decisions by assessing and understanding patterns and datasets, ultimately enabling autonomous interaction. Combined, AI and blockchain become a data gate with these characteristics, which should ensure seamless interaction in the near future.

Auditing of AI through blockchain: AI is often seen as a black box (a complex set of calculations and algorithms) that distinguishes patterns or trends. This makes it difficult for humans to govern the choices the AI makes in producing results. Accountability of the AI black box is seen as the biggest challenge, given concerns across the community that the calculations applied to a given input could be tampered with or altered, which would eventually be reflected in the output. Blockchain can help address this challenge. Robust auditing of these calculations on a blockchain is seen as the biggest driver for enhancing the credibility of business organizations and restoring trust in the reliability of the information.
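The core mechanism behind this kind of auditing can be sketched in a few lines. The example below is a minimal, single-machine hash chain, not a distributed blockchain: each logged AI decision stores the hash of the previous entry, so altering an earlier record breaks every later link. The class and field names (`AuditLog`, `input`, `output`) are hypothetical and chosen only for illustration.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(record: dict) -> str:
    """Deterministic SHA-256 hash of a JSON-serializable record."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class AuditLog:
    """Append-only, hash-chained log of model inputs and outputs."""

    def __init__(self):
        self.entries = []

    def append(self, model_input, model_output) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "index": len(self.entries),
            "input": model_input,
            "output": model_output,
            "prev_hash": prev_hash,
        }
        entry = dict(body, hash=_digest(body))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and check each link to its predecessor."""
        prev_hash = GENESIS_HASH
        for i, entry in enumerate(self.entries):
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["index"] != i or entry["prev_hash"] != prev_hash:
                return False
            if _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.append({"income": 52000}, "approve")
    log.append({"income": 18000}, "decline")
    print(log.verify())                   # chain is intact
    log.entries[0]["output"] = "decline"  # tamper with an old decision
    print(log.verify())                   # tampering is detected
```

A real deployment would replicate the chain across independent nodes and add a consensus step, which is what makes tampering impractical rather than merely detectable.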

Leverage on Artificial Trust: The future roadmap of this fusion could lead to virtual agents that create new ledgers by themselves. Machine-to-machine interaction will become the new norm, providing a trusted and secure way to share data and coordinate decisions, as well as a robust mechanism to reach a quorum.

Machine performance monitoring and changes: Blockchain miners (companies and individuals) pour an incredible amount of money into specialized hardware components. AI can complement this through machine and equipment monitoring, helping to deploy more efficient systems and retire unproductive, heavy ones.

Blockchain for better information management: AI typically runs off an integrated or centralized database. In such a setup there is always a chance of mishap: information can get lost, altered, or corrupted.

The fusion of blockchain and artificial intelligence can address this concern. Under the umbrella of blockchain, the data is decentralized and stored across different nodes or systems. This restores trust that your information is safe and unaltered. Most importantly, the information is time-stamped and sequenced, making recovery easier and more exact.

Some key challenges on the block: The fusion raises technical and ethical implications arising from the interaction between these two technologies, such as the need to edit data on a blockchain and, most importantly, the risk of the duo becoming a data hoarder. Only experimentation will be able to provide a detailed answer on these lines.

In conclusion, blockchain and AI are the two sides of the technology spectrum. One efficiently fosters centralized intelligence while the other promotes decentralized applications in an open-data environment. The fusion of the two will be an intelligent way to amplify positive externalities, advance mankind and, most importantly, reap the maximum potential for business needs.

AIQRATIONS

Beating Back Cyber Attacks with Analytics – A Topical Perspective

The worldwide cyber attack that began last Friday and goes by the name of “WannaCry” has highlighted the need for governments and businesses to strengthen their security infrastructure, in addition to calling attention to the need to mandate security updates and educate lawmakers about the intricacies of cyber security.

During the WannaCry attacks, hospitals had to turn away patients, and their ability to provide care was altered significantly. Even though the threat is widely acknowledged to be real by the information security community and anyone not living under a rock, and the stakes are higher than ever, most organizations and almost all healthcare providers are still using old-school cybersecurity technologies and retain their reactive security postures.

The WannaCry ransomware attack moved too quickly for security teams to respond, but a few organizations were able to spot the early indicators of the ransomware and contain it before the infection spread across their networks. While it wreaked havoc across the globe, there was nothing subtle about it. All of the signs of highly abnormal behavior on the networks were there, but the pace of the attack was far beyond the capacity of human teams to contain it. The latest generation of AI technology enabled those few organizations to defend their networks at the first sign of threat.

Meanwhile, threats of similar, or perhaps worse, attacks have continued to surface. This was not the big one. This was a precursor of a far worse attack that will inevitably strike, and it is likely, unfortunately, that the next attack will not have a kill switch. This is an urgent call to action for all of us to finally get the fundamentals in place so that we can robustly withstand this type of crisis when the next one hits.

Modern malware is now almost exclusively polymorphic and designed in such a way as to spread immediately upon intrusion into a network, infecting every sub-net and system it encounters in near real-time speed. Effective defense systems have to be able to respond to these threats in real time and take on an active reconnaissance posture to seek out these attacks during the infiltration phase. We now have defense systems that have applied artificial intelligence and advanced machine learning techniques and are able to detect and eradicate these new forms of malware before they become fully capable of executing a breach, but their adoption has not matched the early expectations.

As of today, the vast majority of businesses and institutions have neither adopted nor installed these systems, and they remain at high risk. The risk is exacerbated further by targets that are increasingly involved with life or death outcomes, like hospitals and medical centers. All of the new forms of ransomware and extortionware will increasingly be aimed at high-leverage opportunities like insulin pumps, defibrillators, drug delivery systems and operating room robotics.

Network behavioral analytics that leverage artificial intelligence can stop malware like WannaCry and all of its strains before it can form into a breach. And new strains are coming. In fact, by the time this is published, it would not surprise me to see a similar attack in the headlines.

Analytics Is Turning the Tables on Security Threats

The more comprehensive and sensitive the end-user and customer data you store, and the greater its volume, the more tempting a target you are to someone wanting to do harm. That said, the same data attracting the threat can be used to thwart an attack. Analytics draws on all events, activities, actions, and occurrences associated with a threat or attack:

- User: authentication and access location, access date and time, user profiles, privileges, roles, travel and business itineraries, activity behaviors, normal working hours, typical data accessed, application usage

- Device: type, software revision, security certificates, protocols

- Network: locations, destinations, date and time, new and non-standard ports, code installation, log data, activity and bandwidth

- Customer: customer database, credit/debit card numbers, purchase histories, authentication, addresses, personal data

- Content: documents, files, email, application availability, intellectual property

The more log data you amass, the greater the opportunity to detect, diagnose and protect an organization from cyber-attacks by identifying anomalies within the data and correlating them to other events falling outside of expected behaviors, indicating a potential security breach. The challenge lies in analyzing large amounts of data to uncover unexpected patterns in a timely manner. That’s where analytics comes into play.

Leveraging Data Science & Analytics to Catch a Thief

Using data science, organizations can exercise real-time monitoring of network and user behaviors, identifying suspicious activity as it occurs. Organizations can model various network, user, application and service profiles to create intelligence-driven security measures capable of quickly identifying anomalies and correlating events indicating a threat or attack:

- Traffic anomalies to, from or between data warehouses

- Suspicious activity in high value or sensitive resources of your data network

- Suspicious user behaviors such as varied access times, levels, location, information queries and destinations

- Newly installed software or different protocols used to access sensitive information

- Ports used to aggregate traffic for external offload of data

- Unauthorized or dated devices accessing a network

- Suspicious customer transactions

Analytics can be highly effective in identifying an attack not quite underway or recommending an action to counter an attack, thus minimizing or eliminating losses. Analytics makes use of large sets of data with timely analysis of disparate events to thwart both the smallest and largest scale attacks.
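To make the idea of flagging anomalies concrete, here is a minimal sketch that compares new observations against a baseline using a z-score. The traffic numbers are made up for illustration, the function name and the threshold of 3 standard deviations are assumptions, and a production system would use far richer models than a single statistic.

```python
from statistics import mean, stdev


def find_anomalies(baseline, observations, threshold=3.0):
    """Return observations lying more than `threshold` standard
    deviations from the baseline mean (a simple z-score test).

    `baseline` needs at least two values so a sample standard
    deviation can be computed.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any different value is unusual.
        return [x for x in observations if x != mu]
    return [x for x in observations if abs(x - mu) / sigma > threshold]


if __name__ == "__main__":
    # Hypothetical outbound traffic (MB per hour) for one host.
    normal_hours = [120, 135, 110, 128, 140, 125, 118, 132]
    latest = [130, 122, 900]
    print(find_anomalies(normal_hours, latest))  # only the 900 MB hour stands out
```

The same pattern applies to any of the signals listed above, such as login times or query volumes, as long as a baseline of normal behavior is available.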

The Analytics Solution to Security Monitoring

If security monitoring is a data storage problem, then it requires an analytics solution capable of analyzing large amounts of data in real time. The natural place to look for that solution is Apache Hadoop and its ecosystem of dependent technologies. But although Hadoop does a good job performing analytics on large amounts of data, it was developed for batch analysis, not the real-time streaming analytics required to detect security threats.

In contrast, a solution for real-time streaming analytics is Apache Storm, a free and open source real-time computation system. Storm functions similarly to Hadoop but was developed for real-time analytics. It is fast and scalable, and it supports not only real-time analytics but also machine learning, which is necessary to reduce the number of false positives in security monitoring. Storm is commonly found in cloud solutions supporting antivirus programs, where large amounts of data are analyzed to identify threats and where it supports quick data processing and anomaly detection.

The key is real-time analysis. Big data contains the activities and events signaling a potential threat, but it takes real-time analytics to make it an effective security tool, and the statistical analysis of data science tools to prevent security breaches.
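The difference between batch and real-time analysis is easier to see in code. The sketch below is plain Python, not Storm's API: it keeps a sliding time window of events per source and raises a flag the moment a source exceeds a limit, instead of waiting for a batch job to finish. The class name, window length and event limit are all assumptions made for illustration, and timestamps are assumed to arrive in non-decreasing order for each source.

```python
from collections import defaultdict, deque


class SlidingWindowDetector:
    """Flag a source that produces too many events within a time window."""

    def __init__(self, window_seconds=60.0, max_events=5):
        self.window_seconds = window_seconds
        self.max_events = max_events
        self._events = defaultdict(deque)  # source -> recent timestamps

    def observe(self, source, timestamp):
        """Record one event; return True if `source` now exceeds the limit."""
        window = self._events[source]
        window.append(timestamp)
        # Evict timestamps that have fallen out of the window.
        while window and timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_events


if __name__ == "__main__":
    detector = SlidingWindowDetector(window_seconds=10, max_events=3)
    # Hypothetical failed-login events from one address, one per second.
    for second in range(5):
        if detector.observe("10.0.0.7", second):
            print(f"alert at t={second}s")
```

Each event is evaluated as it arrives, so the alert fires at the fourth event rather than at the end of a nightly batch run.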

When do you need to start? – Yesterday

Yesterday would have been a good time for companies and institutions to arm themselves against this pandemic. Tomorrow will be too late.

Ethics and Ethos in Analytics – Why is it Imperative for Enterprises to Keep Winning the Trust from Customers?

Data Sciences and analytics technology can bring huge benefits to both individuals and organizations: personalized service, detection of fraud and abuse, efficient use of resources, and prevention of failure or accident. So why are questions being raised about the ethics of analytics and its superset, Data Sciences?

Ethical Business Processes in Analytics Industry

At its core, an organization is “just people”, and so are its customers and stakeholders. It will be individuals who choose what the organization does or does not do, and individuals who will judge its appropriateness. As individuals, our perspective is formed from our experience and the opinions of those we respect. Not surprisingly, different people will have different opinions on what is appropriate use of Data Sciences and analytics technology in particular, so who decides which is “right”? Customers and stakeholders may have different opinions from the organization about what is ethical.

This suggests that organizations should be thoughtful in their use of analytics, consulting widely and forming policies that record the decisions and conclusions they have come to. They should consider the wider implications of their activities, including:

Context – For what purpose was the data originally surrendered? For what purpose is the data now being used? How far removed from the original context is its new use? Is this appropriate?

Consent & Choice – What are the choices given to an affected party? Do they know they are making a choice? Do they really understand what they are agreeing to? Do they really have an opportunity to decline? What alternatives are offered?

Reasonable – Is the depth and breadth of the data used and the relationships derived reasonable for the application it is used for?

Substantiated – Are the sources of data used appropriate, authoritative, complete and timely for the application?

Owned – Who owns the resulting insight? What are their responsibilities towards it in terms of its protection and the obligation to act?

Fair – How equitable are the results of the application to all parties? Is everyone properly compensated?

Considered – What are the consequences of the data collection and analysis?

Access – What access to data is given to the data subject?

Accountable – How are mistakes and unintended consequences detected and repaired? Can the interested parties check the results that affect them?

Together these facets are called the ethical awareness framework. This framework was developed by the UK and Ireland Technical Consultancy Group (TCG) to help people develop ethical policies for their use of analytics and Data Sciences. Examples of good and bad practices are emerging in the industry, and in time they will guide regulation and legislation. The choices we make as practitioners will ultimately determine the level of legislation imposed around the technology and our subsequent freedom to pioneer in this exciting, emerging technical area.

Designing Digital Business for Transparency and Trust

With the explosion of digital technologies, companies are sweeping up vast quantities of data about consumers’ activities, both online and off. Feeding this trend are new smart, connected products—from fitness trackers to home systems—that gather and transmit detailed information.

Though some companies are open about their data practices, most prefer to keep consumers in the dark, choose control over sharing, and ask for forgiveness rather than permission. It’s also not unusual for companies to quietly collect personal data they have no immediate use for, reasoning that it might be valuable someday.

In a future in which customer data will be a growing source of competitive advantage, gaining consumers’ confidence will be key. Companies that are transparent about the information they gather, give customers control of their personal data, and offer fair value in return for it will be trusted and will earn ongoing and even expanded access. Those that conceal how they use personal data and fail to provide value for it stand to lose customers’ goodwill—and their business.

At the same time, consumers appreciate that data sharing can lead to products and services that make their lives easier and more entertaining, educate them, and save them money. Neither companies nor their customers want to turn back the clock on these technologies—and indeed the development and adoption of products that leverage personal data continue to soar. The consultancy Gartner estimates that nearly 5 billion connected “things” will be in use in 2015—up 30% from 2014—and that the number will quintuple by 2020.

Resolving this tension will require companies and policy makers to move the data privacy discussion beyond advertising use and the simplistic notion that aggressive data collection is bad. We believe the answer is more nuanced guidance: specifically, guidelines that align the interests of companies and their customers, and ensure that both parties benefit from personal data collection.

Understanding the “Privacy Sensitiveness” of Customer Data

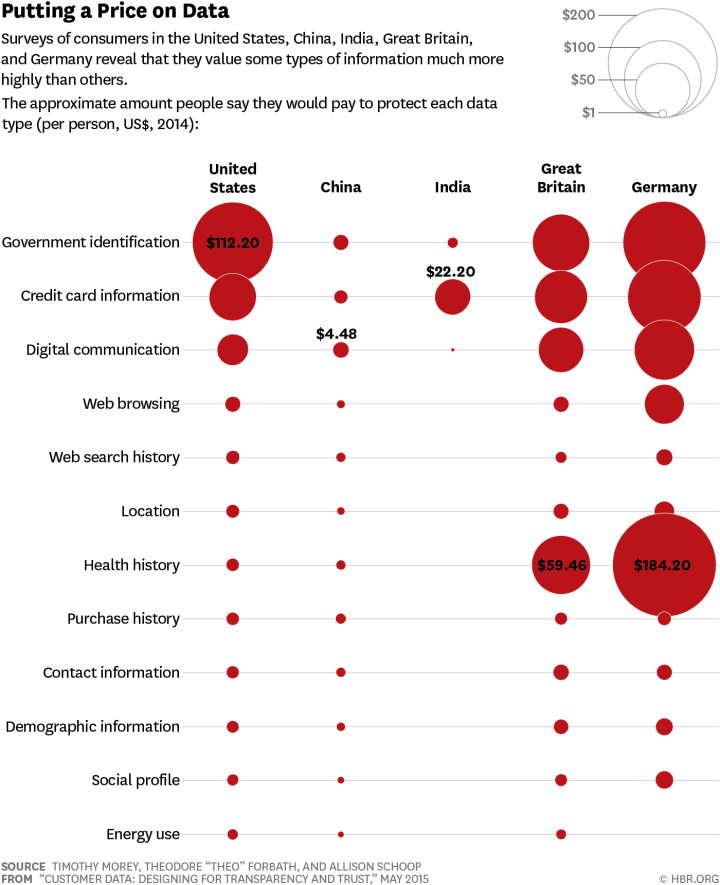

Keeping track of the “privacy sensitiveness” of customer data is also crucial, as data and its privacy are not perfectly black and white. Some forms of data tend to be more important for customers to protect and keep private. To see how much consumers valued their data, a conjoint analysis was performed to determine what amount survey participants would be willing to pay to protect different types of information. Though the value assigned varied widely among individuals, we were able to determine, in effect, a median, by country, for each data type.

The responses revealed significant differences from country to country and from one type of data to another. Germans, for instance, place the most value on their personal data, and Chinese and Indians the least, with British and American respondents falling in the middle. Government identification, health, and credit card information tended to be the most highly valued across countries, and location and demographic information among the least.

This spectrum doesn’t represent a “maturity model,” in which attitudes in a country predictably shift in a given direction over time (say, from less privacy conscious to more). Rather, our findings reflect fundamental dissimilarities among cultures. The cultures of India and China, for example, are considered more hierarchical and collectivist, while Germany, the United States, and the United Kingdom are more individualistic, which may account for their citizens’ stronger feelings about personal information.

Adopting Data Aggregation Paradigm for Protecting Privacy

If companies want to protect their users and data they need to be sure to only collect what’s truly necessary. An abundance of data doesn’t necessarily mean that there is an abundance of useable data. Keeping data collection concise and deliberate is key. Relevant data must be held in high regard in order to protect privacy.

It’s also important to keep data aggregated in order to protect privacy and instill transparency. Algorithms are currently being used for everything from machine thinking and autonomous cars, to data science and predictive analytics. The algorithms used for data collection allow companies to see very specific patterns and behavior in consumers all while keeping their identities safe.

One way companies can harness this power while heeding privacy worries is to aggregate their data. If the data shows 50 people following a particular shopping pattern, stop there and act on that data rather than mining further and potentially exposing individual behavior.
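A minimal sketch of that aggregation rule is shown below. It counts distinct customers per shopping pattern and reports only the patterns shared by at least a minimum number of people, so small groups that could expose individuals are suppressed. The threshold of 50 is taken from the example above; the function name, record layout and pattern labels are hypothetical.

```python
from collections import Counter

MIN_GROUP_SIZE = 50  # threshold taken from the "50 people" example above


def aggregate_patterns(records, min_group_size=MIN_GROUP_SIZE):
    """Count distinct customers per pattern and keep only large groups.

    `records` is an iterable of (customer_id, pattern) pairs. Patterns
    followed by fewer than `min_group_size` customers are dropped, and
    no customer identifiers appear in the result.
    """
    seen = set()
    counts = Counter()
    for customer_id, pattern in records:
        if (customer_id, pattern) not in seen:  # count each customer once
            seen.add((customer_id, pattern))
            counts[pattern] += 1
    return {p: n for p, n in counts.items() if n >= min_group_size}


if __name__ == "__main__":
    # Made-up data: 60 customers share one pattern, 3 share another.
    records = [(i, "weekend-grocery") for i in range(60)]
    records += [(i, "late-night-pharmacy") for i in range(3)]
    print(aggregate_patterns(records))  # the 3-person pattern is suppressed
```

The analyst downstream sees only group-level counts, which is usually enough to act on a trend without ever looking at an individual's behavior.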

Things are getting very interesting…Google, Facebook, Amazon, and Microsoft take the most private information and also have the most responsibility. Because they understand data so well, companies like Google typically have the strongest parameters in place for analyzing and protecting the data they collect.

Finally, Analyze the Analysts

Analytics will play an increasingly significant role in today's integrated, global industries, where the individual decisions of analytics professionals may affect decision making at the highest levels in ways unimagined years ago. A wrong or misjudged model, analysis, or statistic carries a substantial risk of jeopardizing the proper functioning of an organization.

Instruction, rules and supervision are essential, but they alone cannot prevent lapses. Given all this, it is imperative that ethics be deeply ingrained in today's analytics curriculum. I believe that some of the tenets of this code of ethics and standards in analytics and data science should be:

- Apply these ethical benchmarks regardless of job title, cultural differences, or local laws

- Place the integrity of the analytics profession above one's own interests

- Maintain a governance and standards mechanism that data scientists adhere to

- Maintain and develop professional competence

- Have top managers create a strong culture of analytics ethics at their firms, one that filters throughout their entire analytics organization